Introduction

Data Science terminology is buzzing around the internet, and its rise is closely tied to the Industry 4.0 revolution. Data Science is a discipline that studies big data and uses modern tools and techniques for data mining and data analysis, with applications across a wide variety of domains. For example, a Google AI retinal-scan study collected retinal images from thousands of patients across South India and analyzed them to estimate the patients’ predisposition to cardiovascular disease over the next five years.

Statisticians, chemometricians and mathematicians have lived and breathed data science concepts for years, though they may not have used the same buzzwords. Perhaps the spread of the new terminology is an outcome of massive online Data Science courses, or of rebranding by companies that are trying to bank on ‘Data Science’ capabilities. Whatever the reason, we need to prepare ourselves for the Industry 4.0 revolution and get familiar with these new terms. In this article, we broadly separate the meanings of these terms, based on our interactions with various clients.

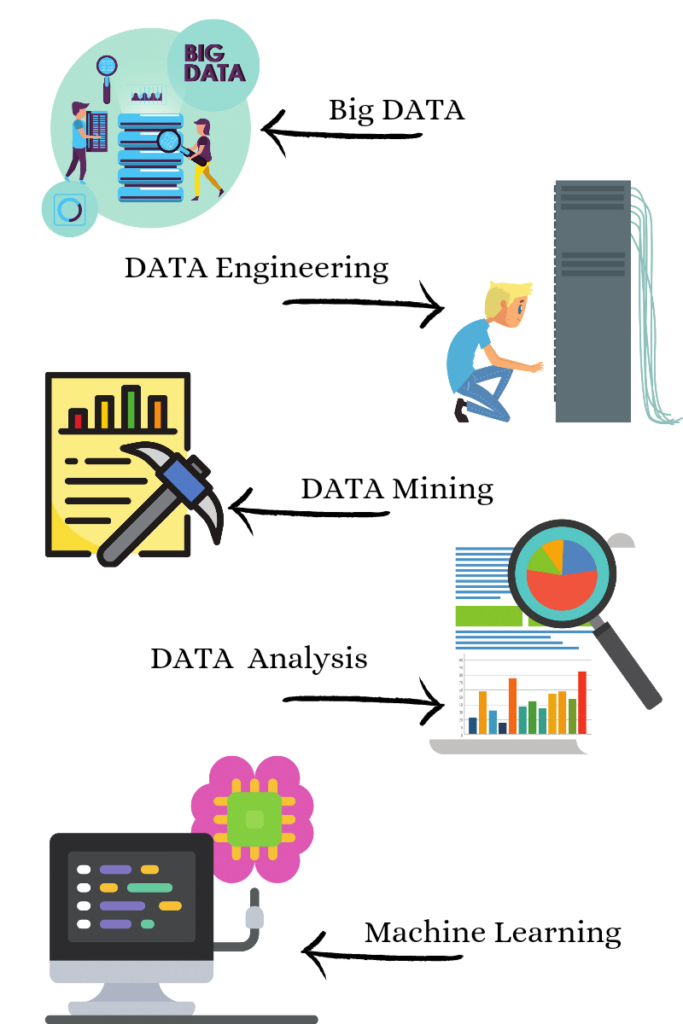

Big Data

Big data, put simply, refers to a collection of data from a wide variety of sources at a colossal scale. The data collected may be quantitative or qualitative, known or unknown, structured or unstructured, and so on. Because the scale is enormous, the data is stored in specialized database systems, and structured data is typically queried with languages such as SQL. Large, organized collections of such data are often kept in data warehouses. Many of the databases used for big data are open source, e.g., Cassandra, HBase, MongoDB, Neo4j, CouchDB, OrientDB, Terrastore, etc. Several popular databases are commercial products that can carry substantial licensing costs, e.g., Oracle, Microsoft SQL Server, SAP HANA, etc., while MySQL is available both as an open-source community edition and in commercial editions. The choice of database is a fundamental and critical step in the Data Science workflow. Storage requirements can range anywhere from megabytes to terabytes and beyond. Sometimes the data volume may be small, but the data complexity can be high. That is where data engineers pitch in to make things easy.

Data Engineering

Data Engineering is the process of building a workflow that stores data in a big data warehouse and then extracts the relevant information from it.
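
To make the store-then-extract idea concrete, here is a minimal sketch of such a workflow. It uses Python's built-in `sqlite3` module as a stand-in for a real data warehouse; the records, the table name `runs` and the helper functions are all hypothetical and chosen only for illustration.

```python
import sqlite3

# Hypothetical raw records, e.g. parsed from instrument exports or log files.
raw_records = [
    {"batch": "A", "temperature_c": "71.5", "yield_pct": "92.1"},
    {"batch": "A", "temperature_c": "73.0", "yield_pct": "93.4"},
    {"batch": "B", "temperature_c": "", "yield_pct": "88.0"},  # missing reading
    {"batch": "B", "temperature_c": "69.0", "yield_pct": "87.2"},
]


def to_float(text):
    """Convert a text field to float; empty strings become None (SQL NULL)."""
    return float(text) if text else None


def load(records):
    """Clean the records, store them in an in-memory table, return the connection."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE runs (batch TEXT, temperature_c REAL, yield_pct REAL)"
    )
    rows = [
        (r["batch"], to_float(r["temperature_c"]), to_float(r["yield_pct"]))
        for r in records
    ]
    conn.executemany("INSERT INTO runs VALUES (?, ?, ?)", rows)
    conn.commit()
    return conn


def yield_by_batch(conn):
    """Extract step: average yield and number of valid temperature readings per batch."""
    cursor = conn.execute(
        "SELECT batch, AVG(yield_pct), COUNT(temperature_c) "
        "FROM runs GROUP BY batch ORDER BY batch"
    )
    return cursor.fetchall()


conn = load(raw_records)
summary = yield_by_batch(conn)
for batch, avg_yield, n_temps in summary:
    print(batch, round(avg_yield, 2), n_temps)
```

In a production setting the same three stages (clean, store, query) would usually run against a dedicated warehouse and a scheduler rather than an in-memory database.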

Data Mining

Data mining is the process of extracting patterns from large datasets by combining methods from statistics and machine learning with database management. These techniques include association rule learning, cluster analysis, classification, and regression. Applications include mining customer data to determine the segments most likely to respond to an offer, mining human resources data to identify the characteristics of the most successful employees, and market basket analysis to model customers’ purchase behaviour.
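
As a rough illustration of market basket analysis, the sketch below counts how often pairs of items are bought together and derives simple association rules. The transactions, the function name `pair_rules` and the `min_support` threshold are made up for this example; real projects would typically use a dedicated algorithm such as Apriori on far larger data.

```python
from collections import Counter
from itertools import combinations

# Hypothetical shopping baskets; each set is one customer transaction.
transactions = [
    {"bread", "milk"},
    {"bread", "butter", "milk"},
    {"bread", "butter"},
    {"milk", "eggs"},
    {"bread", "butter", "eggs"},
]


def pair_rules(baskets, min_support=0.4):
    """Return (antecedent, consequent, support, confidence) for frequent item pairs."""
    n = len(baskets)
    item_counts = Counter(item for basket in baskets for item in basket)
    pair_counts = Counter(
        pair for basket in baskets for pair in combinations(sorted(basket), 2)
    )
    rules = []
    for (a, b), count in pair_counts.items():
        support = count / n  # share of baskets containing both items
        if support < min_support:
            continue
        # confidence(a -> b): share of baskets with a that also contain b
        rules.append((a, b, support, count / item_counts[a]))
        rules.append((b, a, support, count / item_counts[b]))
    # Strongest rules first; ties broken alphabetically for stable output.
    return sorted(rules, key=lambda r: (-r[3], r[0], r[1]))


rules = pair_rules(transactions)
for antecedent, consequent, support, confidence in rules:
    print(f"{antecedent} -> {consequent}: "
          f"support={support:.2f}, confidence={confidence:.2f}")
```

On this toy data the strongest rule is "butter -> bread": every basket that contains butter also contains bread.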

Data Analysis

Data analysis is the exercise of analyzing, visualizing, and interpreting data to get relevant information that helps organizations make informed business decisions. It also involves data cleaning, outlier analysis, data preprocessing, and transformation to make data amenable to analysis. Data analysis is a very broad term that encompasses several different types of analysis. A data scientist chooses the most appropriate method based on the end goal of the analysis. Sometimes the same method can serve different end goals, in which case the technique may go by a different name even though the underlying mathematical and statistical concepts are the same. Data analysis therefore takes the various forms described briefly below:

- Descriptive statistical analysis is the fundamental step for performing any data analysis. It is also known as summary statistics and gives an idea of the basic structural features of the data like measures of central tendency, dispersion, skewness, etc.

- Inferential statistical analysis uses the information contained in a data sample to make inferences about the larger population the sample was drawn from. It uses hypothesis testing to draw statistically valid conclusions about the population. As the sampling process is always associated with an element of error, statistical analysis tools should also account for the sampling error so that a valid inference is drawn from the data.

- Chemometrics is the science of extracting and analyzing physico-chemical information from the data produced by spectroscopic sensors and other material characterization instruments. Chemometrics is interdisciplinary, using methods frequently employed in core data-analytic disciplines such as multivariate statistics, applied mathematics, and computer science to address problems in chemistry, biochemistry, medicine, biology, food, agriculture and chemical engineering. It generally utilizes information from spectrochemical measurements such as FTIR, NIR and Raman, along with other material characterization techniques, to control product quality attributes. It is also used for building Process Analytical Technology tools.

- Predictive analysis models patterns in big data to predict the likelihood of a future outcome. The models are unlikely to be 100% accurate and always carry an intrinsic prediction variance. However, a model’s accuracy can usually be refined as more and more data is taken into account. Predictive analysis can be performed using linear regression, multiple linear regression, principal component regression, partial least squares regression, and linear discriminant analysis, often with principal component analysis as a dimensionality-reduction step.

- Diagnostic analysis, as the name suggests, is used to investigate what caused something to happen. It uses historical data to find out what caused the same kind of event in the past, which makes it a more investigative type of data analysis. It involves four main steps: data discovery, drill-down, data mining and correlation analysis. Data discovery is the process of identifying similar sources of data that underwent the same sequence of events in the past. Drill-down is about focusing on a particular attribute of the data that interests us. This is followed by data mining, which ends with looking for strong correlations in the data that can lead us to the event’s cause. Diagnostic analysis can be performed using all the techniques mentioned for predictive analysis. However, its end goal is only to identify the root cause in order to improve the process or product.

- Prescriptive analysis draws on all the data analysis techniques discussed above, but is more oriented towards making and influencing business decisions. Specifically, prescriptive analytics factors in information about possible situations or scenarios, available resources, past performance and current performance, and suggests a course of action or strategy. It can be used to make decisions on any time horizon, from immediate to long term.
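
To give a feel for how the first forms of analysis in the list above differ in practice, here is a small sketch using only Python's standard library. The `x` and `y` values are invented, the helper names are ours, and the confidence interval uses a normal approximation (for a sample this small, a t-distribution critical value would be more appropriate).

```python
import math
import statistics

# Hypothetical measurements: x is a process setting, y the observed response.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]


def describe(values):
    """Descriptive analysis: summary statistics of one variable."""
    return {
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "stdev": statistics.stdev(values),  # sample standard deviation
    }


def mean_confidence_interval(values, z=1.96):
    """Inferential analysis: approximate 95% interval for the population mean.

    Assumption: normal approximation with z = 1.96.
    """
    centre = statistics.mean(values)
    half_width = z * statistics.stdev(values) / math.sqrt(len(values))
    return centre - half_width, centre + half_width


def fit_line(xs, ys):
    """Predictive analysis: ordinary least-squares fit of y = slope * x + intercept."""
    mean_x, mean_y = statistics.mean(xs), statistics.mean(ys)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(xs, ys))
    sxx = sum((a - mean_x) ** 2 for a in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


summary = describe(y)
low, high = mean_confidence_interval(y)
slope, intercept = fit_line(x, y)
prediction = slope * 6.0 + intercept  # predicted response at an unseen setting

print(summary)
print(f"approx. 95% CI for the mean: ({low:.2f}, {high:.2f})")
print(f"y = {slope:.2f} * x + {intercept:.2f}; prediction at x=6: {prediction:.2f}")
```

The same fitted line could serve a diagnostic goal (how strongly does `x` drive `y`?) or a predictive one (what will `y` be at a new `x`?), which is the sense in which one method can carry different names.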

Data Analytics

Data analytics is the sum of all the activities mentioned above, from big data and data engineering through data mining to the analysis of the data.

Machine Learning

Machine Learning builds programs that learn from data to predict future events, with little explicit programming by humans. Machine learning analytics is an advanced and automated form of data analytics. Machine Learning is a subfield of Artificial Intelligence, and its algorithms generally improve their prediction accuracy as new data is added.
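
As a minimal sketch of a program that learns a prediction rule from data instead of having it written by hand, the example below trains a perceptron, one of the simplest machine learning algorithms, on the logical AND function. The function names, the learning rate and the epoch limit are illustrative choices, not a recommended setup.

```python
def predict(weights, bias, features):
    """Return 1 if the weighted sum of the features is positive, else 0."""
    activation = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, epochs=50, learning_rate=1):
    """Perceptron learning rule: nudge the weights after every wrong prediction."""
    weights = [0] * len(samples[0][0])
    bias = 0
    for _ in range(epochs):
        mistakes = 0
        for features, label in samples:
            error = label - predict(weights, bias, features)
            if error != 0:
                mistakes += 1
                weights = [
                    w + learning_rate * error * x
                    for w, x in zip(weights, features)
                ]
                bias += learning_rate * error
        if mistakes == 0:  # every training example is classified correctly
            break
    return weights, bias


# Labelled training data for logical AND: (inputs, expected output).
and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(and_samples)
predictions = [predict(weights, bias, features) for features, _ in and_samples]
print(predictions)  # [0, 0, 0, 1]
```

Nothing in the code states what AND means; the rule is recovered entirely from the labelled examples.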

Machine learning algorithms that use multi-layered neural networks to learn representations directly from data are called Deep Learning algorithms. Examples include deep neural networks, a form of Artificial Neural Network inspired by the brain’s structure and function. These types of algorithms are designed to be loosely analogous to human intelligence. The major difference between classical machine learning and deep learning lies in how much human effort goes into preparing features: deep learning algorithms learn useful features from raw data themselves, whereas classical machine learning algorithms usually rely on features engineered by people. Both can be trained in a supervised or an unsupervised fashion.
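
To show what a layered network looks like in code, here is a toy two-layer network that computes XOR, a function that a single-layer perceptron cannot represent. Note the assumption: the weights below are picked by hand purely to illustrate the structure. A real deep learning model would learn them from data, typically with backpropagation, and would have many more units and layers.

```python
def step(z):
    """Threshold activation: fire (1) when the input is positive."""
    return 1 if z > 0 else 0


def xor_network(x1, x2):
    """Forward pass through a hidden layer of two units and one output unit."""
    # Hidden layer (hand-picked weights, not learned):
    h_or = step(x1 + x2 - 0.5)   # fires if at least one input is 1
    h_and = step(x1 + x2 - 1.5)  # fires only if both inputs are 1
    # Output layer: "OR but not AND" is exactly XOR.
    return step(h_or - h_and - 0.5)


inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
outputs = [xor_network(a, b) for a, b in inputs]
print(outputs)  # [0, 1, 1, 0]
```

The hidden units act as intermediate features (OR and AND) that the output layer combines, which is the basic idea behind stacking layers.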

Conclusion

The field of Data Science is oriented towards the application side of modern AI/ML tools, which employ advanced algorithms to build predictive models that can transform what we do and how we do it.
